Research and Commentary

- Introduction

- Observation Pool

- Factors/Metrics Used for Model Creation

- Statistical Reliability

- 2016 NBA Playoffs: Actual Outcomes vs Predicted Outcomes

- ’96 Bulls vs ’16 Warriors Best-of-7 Series: Who’s likely to become victorious? (Featuring an NBA Playoff Edition R Shiny Web User-Interactive App to release next week)

1. Introduction

As the season comes to a close, knowing which teams have the upper hand in a playoff series is important. Of course, key matchups play an enormous part in series outcomes, but there are ways to measure a team’s aptitude. These measurables can offer significant insight and yield reasonable likelihoods of one team overcoming another in a best-of-seven series.

For example, take a look at what Elo ratings and several other statistical models accomplish at FiveThirtyEight. They can forecast the probabilities of various scenarios, such as:

- Single Game Win Probability (for which we also have a Def Pen version)

- Playoff Participation Probability

- Championship Probability

- Point Spread

With this in mind, I set out to find ways to assess a team’s aptitude and see how that translates to a playoff series. The goal was to create a metric that could provide accurate predictions for this year’s playoffs and correctly pick the number of games it would take to win a series.

2. Observation Pool

For the model, I compiled 528 observations, which come from every playoff series since the 1981 NBA Playoffs. This is where the multinomial logistic regression model comes in. For each observation, I designated one of the two teams involved as Team A and ranked the series outcome on a scale from one to eight.

Team A can experience one of eight outcomes:

- Losing the series 4-0

- Losing the series (4-1 or 3-0*)

- Losing the series (4-2 or 3-1*)

- Losing the series (4-3 or 3-2*)

- Winning the series (4-3 or 3-2*)

- Winning the series (4-2 or 3-1*)

- Winning the series (4-1 or 3-0*)

- Series Sweep

Team A’s Outcome (Outcome according to Team A’s perspective) is our dependent variable.

Disclaimer: Unfortunately, because the NBA’s first round was a best-of-five for a number of years, the probabilistic results that we produce will be slightly altered. Because we cannot be certain that every 3-0 sweep in a Conference Quarterfinal would have become a 4-0 sweep, I treated 3-0 as equivalent to 4-1 (in other words, winning a series by a margin of 3 games). The same goes for 3-1 and 3-2 Conference Quarterfinal results, which are classified as 4-2 and 4-3, respectively. The inverse holds for series losses. Best-of-five series make up only part of the observation pool, but they may affect how often the model predicts a 5-game series rather than a 4-game sweep.
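To make the scale concrete, here is a minimal Python sketch of how a final series score could be mapped onto the eight outcomes, including the best-of-five adjustment described above. The function name `encode_outcome` is my own for illustration; it is not taken from the original modeling code.

```python
def encode_outcome(team_a_wins, team_b_wins):
    """Map a final series score to the 1-8 outcome scale (Team A's perspective).

    1 = swept 4-0 ... 4 = lost in the deciding game,
    5 = won in the deciding game ... 8 = swept the opponent 4-0.
    """
    winner_wins = max(team_a_wins, team_b_wins)
    loser_wins = min(team_a_wins, team_b_wins)
    if winner_wins == loser_wins:
        raise ValueError("a finished series cannot be tied")

    if winner_wins == 4:
        # Best-of-seven: 4-0, 4-1, 4-2, 4-3 -> steps 0, 1, 2, 3 from a sweep
        steps_from_sweep = loser_wins
    elif winner_wins == 3:
        # Best-of-five: 3-0, 3-1, 3-2 are treated like 4-1, 4-2, 4-3
        steps_from_sweep = loser_wins + 1
    else:
        raise ValueError("series winner must have 3 or 4 wins")

    if team_a_wins > team_b_wins:
        return 8 - steps_from_sweep  # Team A won: outcomes 8 down to 5
    return 1 + steps_from_sweep      # Team A lost: outcomes 1 up to 4


print(encode_outcome(4, 3))  # Team A wins in 7
print(encode_outcome(0, 3))  # Team A swept in a best-of-five
```

Note that a best-of-five sweep never lands on outcome 1 or 8, which is exactly why the sweep probabilities may be slightly understated.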

3. Factors/Metrics Used for Model Creation

I chose a plethora of independent variables to test initially (even some wild interactions between Team Average Age and Defensive Rating), but not all were helpful to the model’s development. I also compared the Cohen’s kappa scores, confusion matrices, mean absolute errors, etc., of most candidate models in R and Python to assess which would give us more consistent and reliable results.

Here were the variables I decided upon (each with a set of seven (k-1) corresponding coefficients):

- Seed (Team A’s Seed)

- Opponent Seed (Team B’s Seed)

- Homecourt (from Team A’s perspective) – Does Team A have homecourt advantage?

- Adjusted Ratings – these will allow for greater compatibility and explanatory power between teams from different years

- Team A’s Adjusted Offensive Rating: 100*ORTG/LeagueAverage – In retrospect, I could have tried to avoid potential collinearity by instead using the BPM method for assessing quality offensive production, with a non-league-adjusted formula like: (1 - Off.TOV%)*(TS%)

- Team B’s Adjusted Offensive Rating

- Team A’s Adjusted Defensive Rating: 100*DRTG/LeagueAverage – It’s difficult for a team to luck into an above-average defense (via unprovoked poor opponent shooting), so we’ll remain confident in defensive rating as a team metric.

- Team B’s Adjusted Defensive Rating

- Team A’s Adjusted Offensive Rebound%: 100*ORB%/LeagueAvgORB%

- Team B’s Adjusted Offensive Rebound%

- Team A’s (Adjusted FTr + Adj. 3PAr): (100*(FTr/LeagueFTr + 3PAr/League3PAr)) – Here’s an interaction term meant to favor teams that take shots with a higher average points-per-shot (PPS) value. Adam Fromal from Bleacher Report & NBA Math pointed to this when identifying the top jump shooters in the NBA. When he adjusts player PPS according to the league-average PPS from select distances, he lists the average PPS values as of January 23rd: “0.820 from 10 to 16 feet, 0.802 from 16 to 23 feet and 1.074 on threes”. Because three-pointers are generally more valuable than long, midrange two-point jumpers, a team that can manufacture good shots could be more threatening. At the same time, we understand that volume does not guarantee marksmanship.

- Team B’s (Adjusted FTr + Adj. 3PAr)

- Team A’s (Adjusted Offensive TOV% – Adjusted Defensive TOV%)^2: Very messy interaction term which tested well but could be removed during future refinements. Ideally, great turnover margins enhance win probability, but squaring the difference discards its sign and makes this term much harder to interpret. Thankfully, the corresponding coefficients are almost negligible upon review. This term will be revisited.

- Team B’s (Adjusted Offensive TOV% – Adjusted Defensive TOV%)^2

- # of Team A’s players who are top 10 in VORP: VORP is a very popular corollary of the BPM statistic. I actually like Dredge’s methodology much more, but for the sake of time and limited access (keeping the 528 observations in mind), I rolled with VORP. There should be more objectivity with this rank than if we were to arbitrarily find thresholds for stardom and classify players accordingly.

- # of Team B’s players who are top 10 in VORP

- Team A Consecutive Playoff Appearance – Playoff pedigree notion

- Team B Consecutive Playoff Appearance
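As a rough illustration of the league-adjusted formulas listed above, here is a short Python sketch. The function names are hypothetical and the shot-profile and turnover inputs are placeholder values; only the 36.9% and 30.6% offensive rebounding figures come from this article (the ’96 Bulls and the 1995-96 league average).

```python
def league_adjusted(team_value, league_average):
    """100 * team / league: 100 means exactly league average."""
    return 100.0 * team_value / league_average


def shot_profile_term(ftr, league_ftr, three_par, league_three_par):
    """(Adjusted FTr + Adj. 3PAr) = 100 * (FTr/LeagueFTr + 3PAr/League3PAr)."""
    return 100.0 * (ftr / league_ftr + three_par / league_three_par)


def turnover_term(adj_off_tov, adj_def_tov):
    """(Adjusted Offensive TOV% - Adjusted Defensive TOV%)^2."""
    return (adj_off_tov - adj_def_tov) ** 2


# Offensive rebounding: '96 Bulls (36.9%) against the 1995-96 league average (30.6%)
bulls_adj_orb = league_adjusted(36.9, 30.6)
print(round(bulls_adj_orb, 1))  # roughly 120.6, i.e. about 20% better than league average

# Placeholder inputs: a team exactly at league average in both rates scores 200
print(shot_profile_term(0.30, 0.30, 0.25, 0.25))

# Placeholder inputs: the square hides which side of the margin a team is on
print(turnover_term(105.0, 95.0), turnover_term(95.0, 105.0))
```

The last line shows why the squared turnover term is hard to read: a team that is 10 points better and a team that is 10 points worse produce the same value.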

4. Statistical Reliability

To ensure that the model was reliable, I used the variable inputs from every playoff series, along with the fitted coefficients, to create an array of “predicted” outcomes (which are essentially reassessed matchups). Then I used R to test a few metrics so that we’ll know how accurate we expect our predictions to be.

- Accuracy: .3485 (share of series in which both the winner and the series length are correct)

- Unweighted Cohen’s kappa estimate: .24 (indicates only modest agreement beyond chance, a byproduct of the many categories)

- Mean Absolute Error: 1.354 (slightly misleading error value*)
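For readers who want to reproduce these three metrics, here is a plain-Python sketch of their textbook definitions. The original checks were run in R, so this is not the code behind the numbers above; the function names and the four-series toy data are hypothetical.

```python
from collections import Counter


def accuracy(actual, predicted):
    """Share of series where the predicted outcome equals the actual outcome."""
    hits = sum(1 for a, p in zip(actual, predicted) if a == p)
    return hits / len(actual)


def mean_absolute_error(actual, predicted):
    """Average distance between predicted and actual outcome on the 1-8 scale."""
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


def cohen_kappa(actual, predicted):
    """Unweighted Cohen's kappa: agreement beyond what chance alone would give."""
    n = len(actual)
    observed = accuracy(actual, predicted)
    actual_counts = Counter(actual)
    predicted_counts = Counter(predicted)
    # Chance agreement: product of the two marginal shares, summed over outcomes
    expected = sum(
        actual_counts[k] * predicted_counts[k] for k in actual_counts
    ) / (n * n)
    if expected == 1.0:
        return 1.0  # degenerate case: a single outcome everywhere
    return (observed - expected) / (1.0 - expected)


# Toy data only: four hypothetical series on the 1-8 outcome scale
actual = [8, 8, 5, 1]
predicted = [8, 7, 5, 4]
print(accuracy(actual, predicted))
print(mean_absolute_error(actual, predicted))
print(round(cohen_kappa(actual, predicted), 3))
```

For scale, an accuracy of .3485 over 528 observations works out to roughly 184 series where the winner and the length were both right.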

Frankly, because “accuracy” requires the predicted result to match the actual one exactly (error = 0) and there are 8 possible outcomes, we welcome this result. Picking among the eight outcomes uniformly at random would be right only about 12.5% of the time.

Furthermore, the mean absolute error suggests that, on average, we’ll miss our mark by about 1.35 steps on the eight-outcome scale. It’s important to note that the predicted result is simply whichever outcome has the highest probability. The probabilities don’t always form a single peak that tapers off on either side. (In other words, if outcome 5 is most likely, it’s not a given that outcomes 4 and 6 are the next most probable.) And if we check a graph of the 2011 Chicago Bulls/Miami Heat ECF, we can see that the model’s most likely result is sometimes a surprising one:

Probabilities ranked:

- Chicago sweep, 24.72%

- Chicago in 6, 15.61%

- Miami in 6, 13.3%

- Miami in 7, 12.98%

Moreover, Miami was 41.7% likely to win this series. While our prediction was a sweep, the probabilities didn’t provide overwhelming support. A miss like this produces a large absolute error and, if it recurs, inflates the mean absolute error that we incur.
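Here is a minimal Python sketch of how a multinomial logit turns one matchup’s inputs into eight probabilities, a single predicted outcome, and a series win probability. Treating outcome 1 as the reference category is my assumption for illustration, all names are hypothetical, and the demo probabilities are made up rather than taken from the fitted model.

```python
import math


def outcome_probabilities(features, intercepts, coefficients):
    """Softmax over 8 outcomes using 7 (k-1) sets of coefficients.

    Assumption: outcome 1 is the reference category with a score of 0.
    `intercepts` holds 7 numbers; `coefficients` holds 7 lists, one per
    non-reference outcome, each as long as `features`.
    """
    scores = [0.0]  # reference outcome
    for intercept, betas in zip(intercepts, coefficients):
        scores.append(intercept + sum(b * x for b, x in zip(betas, features)))
    peak = max(scores)  # subtract the max for numerical stability
    weights = [math.exp(s - peak) for s in scores]
    total = sum(weights)
    return [w / total for w in weights]


def most_likely_outcome(probs):
    """Predicted outcome (1-8): simply the one with the highest probability."""
    return max(range(len(probs)), key=probs.__getitem__) + 1


def series_win_probability(probs):
    """Team A wins the series in outcomes 5 through 8."""
    return sum(probs[4:])


# With all-zero placeholder coefficients, every outcome is equally likely
flat = outcome_probabilities([1.0, 2.0], [0.0] * 7, [[0.0, 0.0]] * 7)
print(flat[0], series_win_probability(flat))

# Made-up probabilities with two peaks: the top pick is a sweep even though
# no single outcome has overwhelming support
demo = [0.05, 0.10, 0.15, 0.12, 0.08, 0.16, 0.09, 0.25]
print(most_likely_outcome(demo), round(series_win_probability(demo), 2))
```

Because the prediction is only the single highest bar, a two-peaked distribution like the demo can yield a confident-sounding pick that sits far from the eventual result.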

Are the NBA Playoffs a different beast? Yes, to an extent. And there certainly are factors like “returning from injury” and coaching changes that aren’t accounted for when creating a model.

5. 2016 NBA Playoffs: Actual Outcomes vs Predicted Outcomes

By taking a look at how the improbable 2016 NBA playoffs unfolded, we should gather additional insight about our predictive power.

Here’s a graph of all 2016 NBA Playoffs outcomes:

The model picked the correct team in 12 out of 15 series matchups. It also would’ve likely been correct on Los Angeles/Portland, but injuries tormented the Clippers. Additionally, both the winner and the series length were correct in 7 of the 15 series.

Mistakes

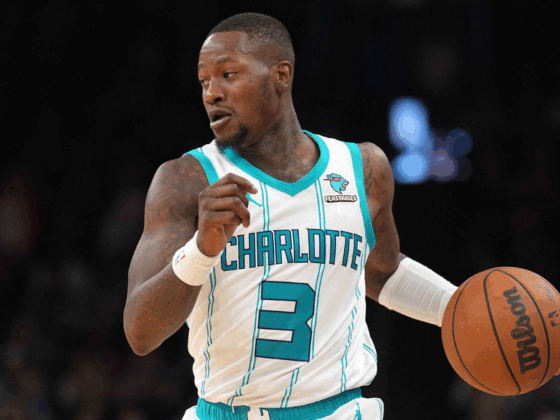

Underestimating how stout OKC could become defensively, as Kevin Durant became a part-time rim protector, was key to this mistake. Dion Waiters also made one of the more peculiar game-saving plays in NBA history.

Take a look at the predicted outcomes for this series that our model missed:

Outcome 3, the actual result, was the most likely of the OKC-win outcomes but still only 14% likely, according to our model.

Cleveland over Golden State in the NBA Finals was simply one of the greatest upsets in recent memory.

Check out how the probabilities are accounted for, in this graph:

Here’s a glimpse of how exhilarating the Cavaliers’ comeback was.

“1” indicates being swept, a 28.39% chance; losing in 5, 21.8%; losing in 6, 11%; losing in 7, 16.76%; winning on the road in 7, 3.12%
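A quick bit of arithmetic on the figures just listed shows how steep the climb was. This sketch assumes the eight outcome probabilities sum to 100%; the variable names are mine, and the three remaining Cleveland-win outcomes are not broken out here, so only their combined share can be recovered.

```python
# Probabilities (in %) quoted above, from Cleveland's perspective
cavs_loss_probs = {
    "swept": 28.39,
    "lose in 5": 21.8,
    "lose in 6": 11.0,
    "lose in 7": 16.76,
}
cavs_win_in_7 = 3.12

warriors_series_prob = sum(cavs_loss_probs.values())
cavs_series_prob = 100.0 - warriors_series_prob           # assumes the total is 100%
cavs_win_before_game_7 = cavs_series_prob - cavs_win_in_7

print(round(warriors_series_prob, 2))    # 77.95
print(round(cavs_series_prob, 2))        # 22.05
print(round(cavs_win_before_game_7, 2))  # 18.93
```

In other words, the model gave Cleveland a bit better than a one-in-five chance overall, with the road Game 7 win being by far the least likely way to get there among the outcomes listed.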

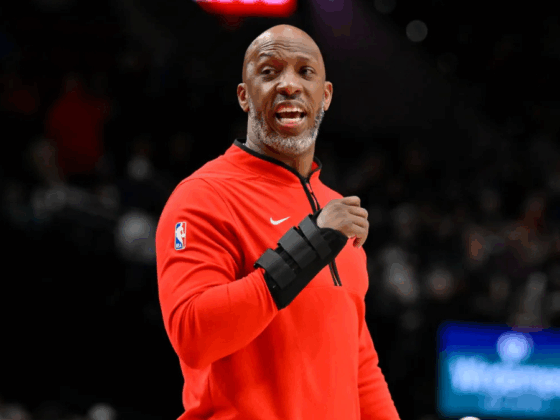

- “Warriors in 5” appeared to be a reasonable outcome for quite a while. From this altercation, you can sense a little exasperation from LeBron James (who normally avoids these imbroglios) and Co. “3-1” NBA Finals deficits had always been harbingers of doom.

Another angle of LeBron James and Draymond Green’s mid-play incident. #NBAFinals (via @clippittv) pic.twitter.com/pglj8Hrv61

— Def Pen Hoops (@DefPenHoops) June 11, 2016

Winning three straight is truly a testament to how splendid LeBron’s and Kyrie’s efforts were down the stretch; what focus!

With that being said, another upset of this magnitude seems unlikely (especially in two straight seasons). Using this model for the 2017 NBA playoffs and expecting trustworthy results seems increasingly realistic.

6. ’96 Bulls vs ’16 Warriors Best-of-7 Series: Who’s likely to become victorious?

Now, for my favorite part: Given our model preparation with league-adjusted information, what would be the most likely outcome for a ‘96 Bulls vs ‘16 Warriors?

To do this, I used the appropriate statistics and assumed that Golden State (73-9) would get homecourt for the seven-game series.

Additionally, I created an interactive R Shiny web application that takes user inputs and returns the model’s resulting probabilities. It’s featured in the following screenshot. This predictive modeling application is more fun than the last, lets you find likelihoods for any teams you’d like, and should be released next week.

Here’s a long look at the prediction, considering Chicago as Team A:

This model projects that Golden State would overcome the Bulls in 7 games; the best chance for the Bulls to break Golden State would come in 6, when they could close the series out at home.

What factors would likely lead to GSW in 7?

- Homecourt – It’s interesting to note that the model considers the Bulls more likely to win in 6 at home than to win on the road in a game 7. While that seems reasonable, we all know what transpired in Oracle Arena in a game 7 last June.

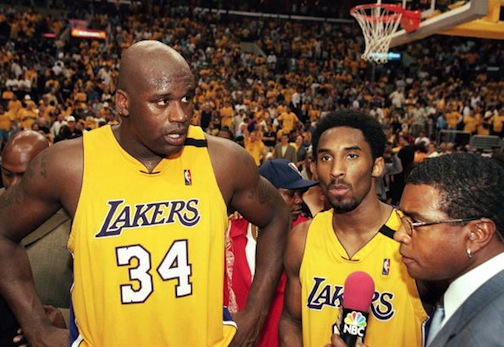

- Our Top 10 VORP measure: [Golden State – 2, Chicago – 1] – Value Over Replacement Player rewards Draymond’s defensive playmaking. Golden State’s ability to switch all pick and rolls and use multiple defensive players on Jordan could benefit them (not asserting that it would stifle Michael Jordan – LaVar Ball doesn’t play for the Warriors). Scottie Pippen is definitely a force to be reckoned with as well – this is something to ponder during future model considerations.

- Warriors’ offensive efficiency relative to league average was greater than that of the Bulls.

- Warriors’ 3PAr and prolific shooting from there – Seeking shots with greater average return could give them a scoring advantage over the Bulls. It’s no secret that Chicago would need prolific scoring (and, likely, many three-pointers) to keep up.

Questions for Golden State would include the following:

- How would the Warriors limit the Bulls’ offensive rebounding prowess? Chicago recovered 36.9% of available offensive rebounds during the 95-96 season. Keeping the implacable Dennis Rodman off the offensive glass would be very challenging. However, it’s worth mentioning that although Chicago’s offensive rebounding percentage was an entire 13.4 percentage points above GSW’s, the league average during the 95-96 NBA season was 30.6%, which is absurdly high relative to our current league.

- Who would accept the challenge of being Michael Jordan’s primary defender?

- Can GSW limit turnovers? Chicago was far above league average at forcing opponent turnovers, and they took care of the ball themselves at an elite mark. If Golden State is nonchalant, then the Bulls will likely punish them.

Rules with regards to physicality:

- Oftentimes, when people refer to mid-90s physicality, they are implying that defenders were allowed to be much more aggressive. Feast your eyes on the antics the SuperSonics unleashed to inhibit Michael Jordan’s freedom, whether he was on or off the ball. Maybe there were times when the referees allowed them to get away with too much. Still, even if the Warriors had to take time to adjust to the abrasiveness, they would likely get enough calls and foul shots to offset the mental hurdles that occasional hyper-physicality might incite.

- Would Curry be completely naive to the concept of fighting for position? Probably not.

Was curry fouled on any of this? pic.twitter.com/HalRzAfFYH

— Stew (@Shake_N_Jake35) May 25, 2016

- Hand-checking was eliminated in 1994-95. After 1997, a defender was no longer permitted to use his forearm to impede the dribbler (above the elbow/free-throw-line/high-post area). The NBA seems to have slowly progressed toward prioritizing an offensive player’s freedom of movement. However, some would argue that defenders didn’t truly adhere to this rule until the mid-2000s. Because of this, many are concerned that if the series were played during Michael Jordan’s era, modern superstar players would be greatly compromised.

- The NBA had a briefly shortened 3-point line, until 1997-98. Could this have increased offensive efficiency league-wide? It’s hard to believe that the ’16 Warriors would struggle shooting from much closer. Conversely, we cannot “know” how the Bulls would perform under the circumstances in a game with modern regulations, but… yikes:

- Illegal Defense Guidelines & Absence of a Defensive 3-Second Violation must be considered. Defenders were allowed to commandeer the paint rather than having to time their rotation correctly. Nevertheless, shrinking the floor may not have been a very viable option for the Bulls against the ’16 Warriors.

Considering all of these factors, I’m inclined to agree with our multinomial model that the ’16 Warriors should win in 7 games. And if the three-point line is stretched to a distance that Pippen and Jordan are a little uncomfortable with, then maybe fewer than 7. Of course, there are other variables to consider that might sway me one way or the other.

Extra: For fun, I also wanted to try to determine what the scores would look like.

As we know, the battle for pace (’16 Golden State, 99.3; ’96 Chicago, 91.1) would be important to the scoring outlook of each game. Using our last web application, I looked for the likelihood that we’d see games with 220 total points or more.

If Chicago stays true to its pace, then it’s unlikely that we’ll see 7 games of high-scoring tallies. The hypothetical NBA Finals matchup could be a grind.
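To put the 220-point threshold in context, here is a back-of-the-envelope Python sketch of the scoring efficiency both teams would need at each pace. It assumes the pace figures are possessions per team in a regulation game and that both teams get the same number of possessions; the function name is hypothetical, and this is a break-even calculation rather than output from the web application.

```python
def required_efficiency(total_points, possessions_per_team):
    """Average points per 100 possessions each team needs to reach total_points."""
    return 100.0 * total_points / (2 * possessions_per_team)


bulls_pace = 91.1      # '96 Chicago
warriors_pace = 99.3   # '16 Golden State
midpoint_pace = (bulls_pace + warriors_pace) / 2

for label, pace in [
    ("Chicago's pace", bulls_pace),
    ("midpoint", midpoint_pace),
    ("Golden State's pace", warriors_pace),
]:
    print(label, round(required_efficiency(220, pace), 1))
```

At Chicago’s pace, both teams would have to average close to 121 points per 100 possessions to combine for 220, versus about 111 at Golden State’s pace, which is why a slower series points toward lower totals.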